Late into Tuesday evening, Jake Tapper of CNN said that if Trump wins the election, "it's going to put the polling industry out of business." Well, Trump won the election, and not surprisingly, many have said my industry is in crisis. That's understandable. A Clinton victory seemed like a sure thing.

But was it? In my opinion, the polls were right. The interpretation of them was wrong.

Polls released over the final weekend and into Monday suggested that Hillary Clinton was leading nationally by three to four points over Donald Trump. And all of the election prediction sites said that she was the favourite to win, with probabilities ranging from 71 per cent from Nate Silver's fivethirtyeight.com to 85 per cent from the New York Times Upshot to 87 per cent from Daily Kos to 98 per cent from Huffington Post Pollster. But there are important differences in what each of these models was saying.

Nate Silver and his team at fivethirtyeight.com deserve kudos for building a more cautious model. He estimated that Trump had about a 30 per cent chance of winning the election. Those are not great odds, and he certainly wasn't the favourite, but there was still a good chance he could win. Given the polls, we should have all said, as I did the Monday before the election, Hillary is the favourite, but Trump could win.

One more point here. We also need to recognize that there was no difference in polling error between the 2012 polls and the 2016 polls. Barack Obama did about two points better on election day than the pre-election polls suggested. The only difference was that the polls were pointing to an Obama victory, most pundits and predictions expected an Obama victory, and that's what happened. But they still underestimated his margin of victory. The story the day after the election was not "the polling business is going out of business," even though the polls "missed the mark" by about the same amount as they did in 2016.

In the end, Donald Trump won the election. He won the electoral college but lost the popular vote. At the time of writing this, millions of votes are still uncounted in California, Washington and other states. Nate Silver estimates that Clinton's lead in the popular vote could grow to one to two percentage points after all the remaining votes are counted.

Let me be as clear as I can: The polls did not fail. This was not a polling disaster. If anything, it was a failure of interpretation.

Survey research is fundamentally about probabilities (for a good primer on this, I highly recommend this article). Much like forecasting weather events, the ebbs and flows of the stock market, or sports betting, survey research is about making informed estimates about something, be it public opinion, voting behaviour or the likelihood to purchase one brand of car over another. To do this, we interview a sample of a population and make inferences about that population based on what we see in the sample.

The tools we use to measure public opinion or behaviour cannot and should not be expected to regularly produce estimates of vote choices to the decimal place. When they do, that's pure luck. Believing that this is even possible is a fundamental misunderstanding of probability theory and the science behind survey research. We should expect some error in the estimates, and the error we saw in American polls last week is, I believe, acceptable.

And let's keep some perspective on what the science of survey research can accomplish.

Every time I get on an airplane, I'm in awe of the fact that we have figured out how to get that heavy metal object to fly around the world. There's an almost perfect chance that each time that plane takes off, it will land safely at its destination.

In the same way, every time I conduct a survey, I'm in awe of its ability to measure the intentions and opinions of large populations using a small sample. Shouldn't we be amazed that we can understand the views and intentions of over 300 million people by conducting a survey of just 1,000 people? Think about that for a moment.
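To put a rough number on what a sample of 1,000 can do, here is a minimal sketch of the textbook margin-of-error calculation. It assumes a simple random sample and a 95 per cent confidence level, which is an idealization: real polls use weighting and other adjustments that typically widen the error. The function name `margin_of_error` is mine, purely for illustration.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate 95% margin of error, in percentage points, for a
    proportion p estimated from a simple random sample of size n.
    p=0.5 is the worst case; z=1.96 is the 95% normal critical value."""
    return 100 * z * math.sqrt(p * (1 - p) / n)

# Assumption: an idealized simple random sample of 1,000 respondents.
moe = margin_of_error(1000)
print(f"about +/- {moe:.1f} points")  # about +/- 3.1 points
```

Notice that the size of the population does not appear in the formula. For a large population, it is the size of the sample that drives the precision, which is why 1,000 interviews can say something about hundreds of millions of people.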

Instead of celebrating the fact that we can produce such good estimates about how people will vote in what was one of the craziest, most unpredictable elections in American history, too many, especially those in the news media, deride the polls as factious, flawed and failures. It's ironic given these same news organizations rely so heavily on these same polls to help tell the story of the election, to understand how people are responding to the news of the day.

The American Association for Public Opinion Research's conclusion that "the polls clearly got it wrong this time" is simply not true. Our interpretation of the polls was wrong. Many of the projection models were wrong. But the polls, at least this time, were darn good.

For those interested in how I came to these conclusions, read my analysis below.

In the United States (unlike in Canada, where we release "decided or committed voter estimates"), most pollsters include in their reported results the respondents who say they are "undecided" or "don't want to tell" who they are voting for. This means that if you add up only the vote estimates for Clinton, Trump, Johnson, Stein and other candidates, the total won't reach 100. That's because those results also include respondents who said they were undecided or didn't want to disclose who they were voting for.

For example, the final Bloomberg poll by well-respected pollster Ann Selzer had Clinton ahead by three points (46 per cent to 43 per cent) with five per cent saying they would be voting for another candidate, one per cent not sure, and five per cent saying they didn't want to say who they were voting for. If we recalculate those numbers by removing the "undecideds" and "don't want to tells" we get Clinton 49 per cent, Trump 46 per cent, and others at five per cent. If we remove the "other" votes and make it a simple two-way race, it's Clinton 52 per cent, Trump 48 per cent.
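For anyone who wants to check that arithmetic, here is a minimal sketch of the recalculation using the Bloomberg numbers quoted above. The helper name `renormalize` and the category labels are mine, for illustration only; the idea is simply to drop some categories and re-percentage what is left.

```python
def renormalize(shares, drop):
    """Drop the categories named in `drop`, then rescale the remaining
    shares so they add up to 100."""
    kept = {k: v for k, v in shares.items() if k not in drop}
    total = sum(kept.values())
    return {k: 100 * v / total for k, v in kept.items()}

# Final Bloomberg/Selzer poll as quoted in the text (per cent).
bloomberg = {"Clinton": 46, "Trump": 43, "Other": 5,
             "Not sure": 1, "Won't say": 5}

# Step 1: remove the "undecideds" and "don't want to tells".
decided = renormalize(bloomberg, drop={"Not sure", "Won't say"})

# Step 2: also remove "other" to get a simple two-way race.
two_way = renormalize(bloomberg, drop={"Not sure", "Won't say", "Other"})

print({k: round(v) for k, v in decided.items()})
# {'Clinton': 49, 'Trump': 46, 'Other': 5}
print({k: round(v) for k, v in two_way.items()})
# {'Clinton': 52, 'Trump': 48}
```

The three-point gap in the raw numbers becomes roughly a four-point gap in the two-way race only because of rounding: before rounding, the two-way shares are about 51.7 and 48.3.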

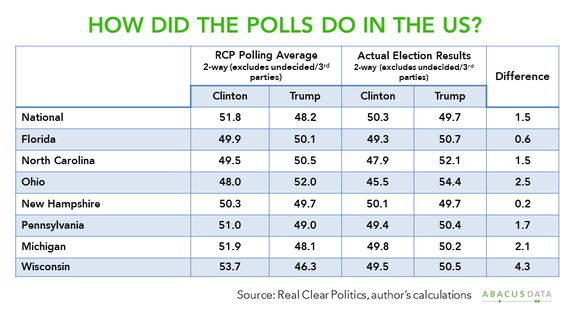

Using just the two-way race, I recalculated the final polling averages from RealClearPolitics and the current election results in some of the key battleground states. Nationally, the difference between the polls and the result was 1.5 points. The polls were particularly good in New Hampshire (0.2) and Florida (0.6). Estimates were less precise in Ohio (2.5) and Michigan (2.1). Except for Wisconsin, where the polls underestimated Trump's support beyond what we should expect, these differences are reasonable given the limitations of survey research.

Let's take Michigan, for example. Many, perhaps except for Michael Moore, were shocked that Trump won Michigan. But if we look at the average of the final polls in Michigan, Clinton led, on average, by 3.8 points, barely outside the margin of error for most polls conducted in the state. The final three state polls in Michigan described the race as a five-point Clinton lead, a two-point Trump lead or a two-point Clinton lead. Two of the three had the race within the margin of error. In the end, she lost by 0.3 points, or about 12,000 votes.

Were Clinton's odds of winning the state higher than Trump's given the polls? Yes. Was her winning the state a certainty? No. A small shift in turnout or an error in the poll estimates could have easily produced the outcome we saw and defied our expectations.
