A University of Toronto professor has a machine fastened to his eye that gives him on-demand photographic memory. A colour-blind Spanish artist wears a forehead sensor that converts light into sound and transmits it to his inner ear, where he "hears" colour. An Iraq veteran who lost his sight to a rocket-propelled grenade senses objects around him through ripples he feels on his tongue. And perhaps most impressive of all, a woman paralyzed for 14 years imagines a robot arm handing her a drink, and a chip in her brain tells the arm to do just that.

The boundary between human and machine is softening. The first cyborgs have emerged -- much sooner than scientists would have predicted 30 years ago.

The secret to cybernetic success is the brain's adaptability: we don't need to fully understand the brain to attach hardware to it. When Neil Harbisson, the Spanish artist, approached university student Adam Montandon about a visual aid that would let him hear colours, Montandon put a device together in two weeks with £50 and a bit of tape. Crude as that device was, Harbisson's brain adapted to it.

The first prototype was non-invasive, worn like a hearing aid, and could identify 16 different hues. At first he had headaches from all the ambient noise (and if you watch his TED talk and hear the sounds he listens to, you'll get a headache too), but after a few months of training he began to tell the tones apart and associate them with colours and objects, like "green" with grass. Eventually the prototype was replaced with an implanted chip that transmits directly to his inner ear via bone conduction. With the more sensitive device he can hear 360 hues, including some that lie outside the range of human vision.

You might expect that Harbisson has to actively decode each tone to understand it, the way you would have to consult a phrasebook after seeing a street sign written in Chinese. But as Harbisson learned to use his device, interpreting the sounds became automatic. He learned to think in colour, the way a non-native speaker learns to think in Mandarin.

Neuroimaging studies have shown that when blind people use cybernetic visual aids, they "recruit" the parts of the brain that sighted people use to process vision. So although Harbisson receives colour as sound, his brain likely processes it as sight.

And it's not an isolated phenomenon. Craig Lundberg, the British Iraq veteran, uses a cybernetic aid that works on the same principle but relies on touch instead of sound. His machine converts incoming light into signals on a "lollipop" inside his mouth (which he says "feels like licking a nine-volt battery"), and again it's the visual centres of his brain that process the information. He thus "sees" the world through the tactile nerves of his tongue.

The brain sorts sensory input not by where it comes from but by what kind of information it carries. To appreciate how remarkable that is, consider an analogy: I think we would all be impressed by a stereo system that could play any disc or drive format imaginable, as long as it contained something recognizable as music.

The practical upshot is that, contrary to what early cyberneticists expected, sensory augmentation devices work more or less out of the box, with no special installation required. We don't need to plug a visual prosthetic directly into the visual part of the brain: the device can be plugged in almost anywhere, and, given some time to adapt, the brain will do the rest.

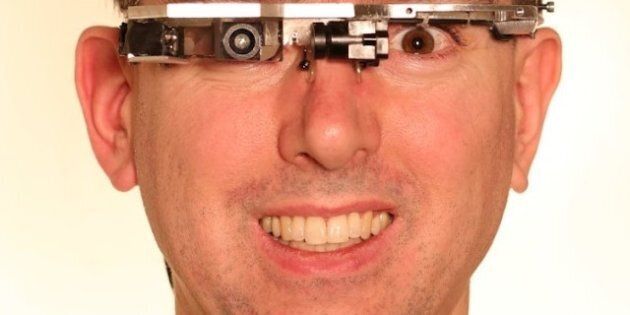

There's a corollary. We used to think cybernetic devices were distinguished from wearable computers by invasiveness. Having a device implanted in your skull made you a cyborg; wearing a pair of digital glasses did not. But to the brain, the distinction is arbitrary. Lundberg's non-invasive device and Harbisson's invasive one are both just sensors transmitting signals, and the brain will treat them just like an extra set of eyes or ears or fingertips.

In the same vein, it might not be accurate to think of sensory augmentation devices such as Google's Project Glass as just a wearable version of a mobile phone or computer. If your brain is receiving constant input from the glasses, the way it does from your eyes and ears, it may recognize and adapt to the glasses the way it does to a cybernetic device. You might begin thinking in Google the way Harbisson thinks in colour.

Then we'll really have to answer the question: where does "me" end, and "my machine" begin?